The race to become the world’s most trusted data platform is no longer a silent rivalry. Enterprises are investing in cloud infrastructure at an unprecedented rate, with spending on public cloud services expected to increase 21.5% year over year, so the choices businesses make about their data platforms have never carried more weight. At the center of this discussion is one of the most hotly debated matchups in the data world: Snowflake vs Databricks. Both are powerful. Both are enterprise-grade. But they are built very differently, and choosing one that is not designed for the way you intend to work can cost you more than money.

What Are These Two Platforms Really?

To understand where the Snowflake vs Databricks debate gets deep, it helps to look first at what each platform set out to do, because their origins explain much about where they shine and where they falter.

Snowflake is a cloud-native data warehouse, built from the ground up for the cloud. It launched with a bold concept: decouple compute and storage, allow them to scale independently, and make SQL-based analytics work across cloud providers. For business intelligence reporting, fast and secure queries over structured data, and data sharing across organizations, Snowflake soon became the preferred option. Its multi-cluster warehouses let multiple workloads run at the same time without contending for resources, which makes it especially appealing to BI-intensive enterprises.

Databricks, by contrast, grew out of Apache Spark. Its founders, most of whom were researchers at UC Berkeley, wanted to create a platform that brought data engineering, data science, and machine learning under one roof. They called this the “lakehouse” model, which combines the flexibility of a data lake with the structure of a warehouse. If you are dealing with complex ETL processes, predictive analytics in BI, or building and deploying machine learning models at scale, Databricks was designed with you in mind.

When people compare Snowflake vs Databricks, they are not actually comparing apples to apples. They are contrasting a highly focused analytical engine with a multi-purpose data platform. The right decision depends entirely on what a business actually needs to do with its data.

Snowflake vs Databricks Architecture: Where the Real Differences Begin

The architectural differences between Snowflake and Databricks are likely the most critical aspect to consider before making any decision.

Snowflake's architecture is elegantly simple. It separates storage (data is kept in cloud object stores such as S3 or Azure Blob Storage) from compute (virtual warehouses execute the queries). This decoupling means you can scale compute up or down without affecting your data storage, and multiple teams can query the same data at the same time using independent compute clusters without slowing each other down. It is what makes Snowflake so reliable for business intelligence transformation and for workloads that require high concurrency.
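The separation described above can be pictured with a toy model. This is only an illustrative sketch in plain Python, not Snowflake's API: the `SharedStorage` and `VirtualWarehouse` classes, the warehouse names, and the sample table are all hypothetical. It shows two independent compute clusters reading one stored copy of the data, with one of them resized without touching the other or the data.

```python
from dataclasses import dataclass, field


@dataclass
class SharedStorage:
    """Stands in for cloud object storage; holds one copy of each table."""
    tables: dict = field(default_factory=dict)


@dataclass
class VirtualWarehouse:
    """Stands in for an independent compute cluster reading shared storage."""
    name: str
    storage: SharedStorage
    nodes: int = 1

    def resize(self, nodes: int) -> None:
        # Scaling compute changes only this warehouse, never the stored data.
        self.nodes = nodes

    def total(self, table: str, column: str) -> float:
        # A trivial "query": sum one column over every row in the table.
        return sum(row[column] for row in self.storage.tables[table])


# Hypothetical data and teams, for illustration only.
storage = SharedStorage({"orders": [{"amount": 120.0}, {"amount": 80.0}]})
bi_team = VirtualWarehouse("bi_reporting", storage, nodes=1)
ds_team = VirtualWarehouse("data_science", storage, nodes=4)

bi_team.resize(2)  # only the BI warehouse changes size
print(bi_team.total("orders", "amount"), ds_team.total("orders", "amount"))
```

Both warehouses return the same total because they read the same stored rows; only the compute side differs between them.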

Databricks takes a different approach. It is built on top of Delta Lake, an open-source storage layer that brings ACID transactions to data lakes. The platform scales well for large-scale data processing, complex ETL operations, and training machine learning models on large datasets. The tradeoff? It takes more engineering skill to set up and operate effectively.
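To give a feel for what ACID transactions on a data lake involve, here is a heavily simplified sketch of an ordered commit log with an optimistic version check. It is a toy in plain Python, not Delta Lake's implementation or API: the `ToyTransactionLog` class, the `CommitConflict` exception, and the file names are hypothetical, and real conflict handling is considerably more nuanced than rejecting every stale writer.

```python
class CommitConflict(Exception):
    """Raised when another writer has already claimed the target version."""


class ToyTransactionLog:
    """Ordered log of commits; table state is the replay of all commits."""

    def __init__(self):
        self._commits = []  # index i holds the actions of version i

    @property
    def latest_version(self) -> int:
        return len(self._commits) - 1  # -1 means the log is empty

    def commit(self, read_version: int, actions: list) -> int:
        # Optimistic check: the writer must have read the newest version.
        if read_version != self.latest_version:
            raise CommitConflict(
                f"read v{read_version}, but log is at v{self.latest_version}"
            )
        # All actions become visible together as one new version.
        self._commits.append(list(actions))
        return self.latest_version

    def snapshot(self) -> set:
        # Replay the log; any action other than "add" is treated as a removal.
        files = set()
        for actions in self._commits:
            for op, path in actions:
                if op == "add":
                    files.add(path)
                else:
                    files.discard(path)
        return files


log = ToyTransactionLog()
v0 = log.commit(-1, [("add", "part-0.parquet"), ("add", "part-1.parquet")])
v1 = log.commit(v0, [("remove", "part-0.parquet"), ("add", "part-2.parquet")])

stale_rejected = False
try:
    log.commit(v0, [("add", "part-3.parquet")])  # writer with a stale read
except CommitConflict:
    stale_rejected = True

print(sorted(log.snapshot()), stale_rejected)
```

Readers only ever see whole versions, so a multi-file change is either fully visible or not visible at all, which is the atomicity half of the ACID guarantee.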

A useful way to summarize the Snowflake vs Databricks architecture comparison is as follows:

- Snowflake = Cloud data warehouse designed to be used by analysts and BI teams who are SQL-literate.

- Databricks = A single data and AI platform designed to enable engineers and data scientists to do far more than query.

Performance and Speed: Who Wins Where?

In a Snowflake vs Databricks performance comparison, neither platform outshines the other in every respect. Each has its sweet spot.

Where Snowflake leads:

- Parallel SQL queries over structured data.

- Near-real-time analytics dashboards fed by continuously loaded data.

- BI reporting using tools such as Tableau, Looker, and Power BI.

- Secure data sharing between internal teams and external partners, without transferring or duplicating data.

Where Databricks leads:

- Large-scale batch processing and complicated ETL processes that entail unstructured or semi-structured information.

- Machine learning solutions, including training models at scale.

- Streaming analytics and real-time data pipelines built on Apache Spark Structured Streaming.

- AI development services such as model fine-tuning, feature engineering, and experiment tracking with MLflow.

This is why the Snowflake vs Databricks question is not necessarily either/or. Many enterprises, in fact, operate the two platforms in parallel: Snowflake handles the structured analytics and reporting layer, while Databricks runs the heavier data science and engineering workflows behind it.

Use Cases: Matching the Platform to the Problem

Let’s get specific. The Snowflake vs Databricks choice usually plays out differently across business scenarios:

Choose Snowflake if your team needs to:

- Provide quick, trustworthy business intelligence reporting to non-technical stakeholders.

- Manage structured data across multiple cloud environments with little operational overhead.

- Share data securely across departments or with outside vendors.

- Scale analytics workloads without managing the underlying infrastructure.

- Support concurrent reporting across large teams using multi-cluster warehouses.

Choose Databricks if your team needs to:

- Develop machine learning applications and AI models and deploy them into production.

- Process high-volume, mixed-format data from IoT devices, logs, or event streams.

- Perform complex, multi-step ETL processes on raw and processed data.

- Deliver AI decision-making capabilities using custom model pipelines.

- Run data engineering services at scale across petabytes of diverse data.

Consider both if your organization needs to:

- Support business intelligence transformation at the reporting layer while also investing in AI development services.

- Combine structured analytics with advanced predictive analytics in BI.

- Create a unified data strategy to support operational and experimental workloads.

Data Engineering and Integration

The Snowflake vs Databricks comparison is especially interesting in the area of data engineering services.

Databricks was built with data engineers in mind. With native support for Python, Scala, R, and SQL, and close integration with Delta Lake, dbt, and Apache Kafka, it handles even the messiest real-world data pipelines with relative grace. Data engineers and analysts who need to work across raw, bronze, silver, and gold data layers tend to feel much more at home here.
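The layered approach mentioned above can be sketched in a few lines. This is a minimal illustration in plain Python rather than Spark or Delta Lake code, and it assumes the common convention that bronze holds data as ingested, silver holds cleaned and typed data, and gold holds reporting-ready aggregates. The sample events and the function names `to_bronze`, `to_silver`, and `to_gold` are hypothetical.

```python
from collections import defaultdict

# Hypothetical raw events as they might arrive from a device feed.
raw_events = [
    {"device": "a1", "temp_c": "21.5"},
    {"device": "a1", "temp_c": "22.5"},
    {"device": "b2", "temp_c": "bad-reading"},
    {"device": "b2", "temp_c": "18.0"},
]


def to_bronze(events):
    # Bronze: keep every record as received, adding only ingestion metadata.
    return [{**event, "ingest_seq": i} for i, event in enumerate(events)]


def to_silver(bronze):
    # Silver: enforce types and drop records that fail validation.
    silver = []
    for row in bronze:
        try:
            temp = float(row["temp_c"])
        except ValueError:
            continue
        silver.append({"device": row["device"], "temp_c": temp})
    return silver


def to_gold(silver):
    # Gold: aggregate to a reporting-ready shape (mean temperature per device).
    readings = defaultdict(list)
    for row in silver:
        readings[row["device"]].append(row["temp_c"])
    return {device: sum(vals) / len(vals) for device, vals in readings.items()}


bronze = to_bronze(raw_events)
silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)  # {'a1': 22.0, 'b2': 18.0}
```

Keeping the bronze layer untouched means the malformed reading is still available for inspection or reprocessing, even though it never reaches the silver or gold layers.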

Snowflake has also come a long way in data engineering. Its continuous data loading (Snowpipe), change data capture (Streams and Tasks), and Dynamic Tables, which keep transformed results up to date declaratively, make it a surprisingly powerful choice for teams who want to keep their data engineering on the same platform they use for analytics. But for very complex, code-intensive ETL processes, Databricks still has the edge.

Security, Governance, and Compliance

Both platforms take data governance seriously, though they approach it differently.

Snowflake's approach to secure data sharing is truly innovative. It enables organizations to share live, governed data with external parties without physically copying or moving it. That makes Snowflake a strong option in highly regulated sectors such as finance and healthcare.

Databricks handles governance through Unity Catalog, its unified data governance layer, which provides fine-grained access controls, data lineage, and audit logging across all assets in the lakehouse. In organizations running machine learning solutions at scale, Unity Catalog can be used to ensure that models, features, and pipelines are tracked and auditable.

In the Snowflake vs Databricks security debate, Snowflake wins on simplicity and ease of management for SQL users. Databricks wins on engineering-friendly depth and flexibility when governing a variety of assets.

Pricing: What You Actually Pay

It is in pricing that the Snowflake vs Databricks comparison becomes tricky, and in many instances, organizations are taken by surprise.

Both platforms use consumption-based pricing: you pay for what you use rather than for a fixed license. Snowflake bills by the compute credits its virtual warehouses consume, and storage is billed separately. Databricks bills in Databricks Units (DBUs), with rates that vary by compute tier and workload type.

A few things to keep in mind:

- Snowflake costs can climb quickly under high-concurrency query workloads unless warehouses are carefully sized and set to suspend automatically.

- Managing Databricks clusters can be complicated, and improperly configured clusters may cause unforeseen cloud infrastructure expenses.

- Both platforms provide cost optimization tools, but using them effectively takes deliberate effort.

- For organizations that are just beginning to use cloud data warehouse capabilities, a per-query pricing model is sometimes easier to predict and manage.
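The consumption model described above comes down to simple arithmetic, sketched here in Python. Treat every number as an assumption: the credits-per-hour table follows the commonly documented doubling by standard warehouse size, while the prices per credit and per DBU, the DBU rate, and the running hours are hypothetical placeholders, not quoted prices. Storage charges and cloud provider VM charges are left out.

```python
# Credits consumed per hour by warehouse size (assumed: standard warehouses,
# doubling with each size; verify against current vendor documentation).
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8}


def snowflake_compute_cost(size, running_hours, price_per_credit):
    """Compute cost = credits per hour x hours running x price per credit."""
    return CREDITS_PER_HOUR[size] * running_hours * price_per_credit


def databricks_compute_cost(dbu_per_hour, running_hours, price_per_dbu):
    """DBU cost only; cloud provider VM charges are assumed to be separate."""
    return dbu_per_hour * running_hours * price_per_dbu


PRICE_PER_CREDIT = 3.00  # hypothetical rate, not a quoted price
PRICE_PER_DBU = 0.50     # hypothetical rate, not a quoted price

# A Medium warehouse left running all month vs. suspended when idle
# (assumed 6 active hours per day over a 30-day month).
always_on = snowflake_compute_cost("M", 24 * 30, PRICE_PER_CREDIT)
auto_suspended = snowflake_compute_cost("M", 6 * 30, PRICE_PER_CREDIT)

# A hypothetical ETL cluster consuming 10 DBUs per hour for 4 hours a day.
etl_cluster = databricks_compute_cost(10, 4 * 30, PRICE_PER_DBU)

print(always_on, auto_suspended, etl_cluster)  # 8640.0 2160.0 600.0
```

Under these assumed numbers, suspending the idle warehouse cuts the compute bill to a quarter, which is why sizing and auto-suspend settings matter so much in practice.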

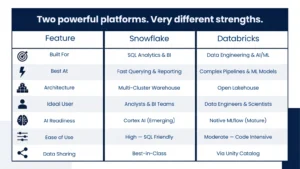

Snowflake vs Databricks: A Side-by-Side Snapshot

Which One Is Right for Your Business?

Here is a candid verdict on Snowflake vs Databricks after examining both platforms closely:

If your organization is mostly about reporting, business intelligence transformation, and delivering clean analytics to stakeholders who think in dashboards and SQL, Snowflake is the more natural home. Onboarding is easier, governance is simpler, and it is designed to make analysts productive quickly.

If your organization is investing in machine learning solutions, developing data products, or running more complex AI decision-making pipelines, Databricks gives you the horsepower and flexibility to get that work done at scale.

And if you are developing an enterprise data strategy that spans everything from data ingestion to production AI, the honest answer to the Snowflake vs Databricks debate may be that you need both.

Conclusion

The Snowflake vs Databricks debate does not have a single winner, and it never will, because both platforms are genuinely excellent at different things. Snowflake is the gold standard for cloud data warehouse analytics, secure data sharing, and business intelligence reporting. Databricks leads when the work gets heavier: ETL processes, machine learning solutions, and AI decision making at enterprise scale. The smartest organizations are not picking a side; they are building data strategies that use the right tool for the right job. If you need guidance on where to start or how to build that strategy, AnavClouds Analytics.ai brings the expertise to help you move forward with confidence, from data platform selection to full-scale AI development services and beyond.

FAQs

1. What is the main difference between Snowflake and Databricks?

Snowflake is a cloud data warehouse that is oriented towards structured data analytics and business intelligence reporting. Databricks is a single data and AI platform designed to support data engineering, machine learning solutions, and large-scale ETL processes. In the Snowflake vs Databricks comparison, the fundamental difference lies in the users of each platform, namely analysts with Snowflake and engineers and data scientists with Databricks.

2. Is it possible to use Snowflake and Databricks together?

Yes, and many enterprises do exactly that. Snowflake vs Databricks is not necessarily an either/or choice. Organizations commonly use Databricks for data engineering services and machine learning workflows, then feed the processed data into Snowflake to drive business intelligence reporting and stakeholder-facing analytics.

3. Which platform is better suited to real-time analytics?

Both platforms support real-time analytics, but in different ways. Snowflake offers Snowpipe and Dynamic Tables for near-real-time data ingestion and querying. Databricks uses Apache Spark Structured Streaming to handle true streaming workloads. In the Snowflake vs Databricks comparison of real-time analytics, Databricks tends to be better suited to high-throughput streaming pipelines, whereas Snowflake is better suited to near-real-time queries over structured, already ingested data.

4. Is Snowflake or Databricks more cost-effective?

It depends heavily on the type of workload. Snowflake's credit-based pricing is typically more predictable for SQL analytics teams. Data engineering services and machine learning solutions at scale may be more cost-effective on Databricks, but cluster management needs attention to prevent runaway costs. In the Snowflake vs Databricks cost comparison, neither platform is always cheaper: the more economical platform is usually the one that best fits the workload.